Understanding AMMs and Concentrated Liquidity

Concentrated Liquidity (CL) is arguably the biggest Decentralized Finance innovation of the last 2 years. It has revolutionised Automated Market Makers (AMMs) and is now making its way to NFTs.

Those who understand CL have a competitive edge in the market, profiting from those who don’t.

So (very simply) how does it work?

First we need some background on liquidity pools, which are usually composed of two assets. (Let’s call them asset X and asset Y.)

When you supply asset X to a pool, you receive asset Y in exchange. This can be understood as “sell X to buy Y”.

When you add X and remove Y, the ratio between X and Y in the pool thus changes. If a liquidity pool starts with 50% X and 50% Y, an input of X in exchange for Y could leave us with 70% X and 30% Y in the pool. That ratio can be thought of as “the price of X denominated in Y”.

You’ve probably heard of the equation we use to express this, called a constant product invariant:

x * y = k

Because k remains constant, increasing X reduces Y to maintain the equation.
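To make the invariant concrete, here is a minimal Python sketch of a single swap. The function name `swap_x_for_y` and the pool sizes are illustrative assumptions, and trading fees are ignored for simplicity.

```python
def swap_x_for_y(x_reserve: float, y_reserve: float, dx: float) -> float:
    """Return the amount of Y paid out when dx of X is added to the pool.

    Assumes a fee-free constant product pool (x * y = k).
    """
    k = x_reserve * y_reserve      # invariant before the trade
    new_x = x_reserve + dx         # the trader deposits X
    new_y = k / new_x              # Y must shrink so that x * y stays equal to k
    return y_reserve - new_y       # the difference is paid out to the trader


# Hypothetical pool holding 1,000 X and 1,000 Y
x, y = 1_000.0, 1_000.0
dy = swap_x_for_y(x, y, 100.0)

new_x, new_y = x + 100.0, y - dy
price_before = y / x               # price of X denominated in Y
price_after = new_y / new_x

print(round(dy, 2), round(price_after, 4))   # 90.91 0.8264
```

Selling 100 X returns only about 90.91 Y, and the price of X in the pool drops from 1 to roughly 0.83 Y, while the product of the two reserves is unchanged.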

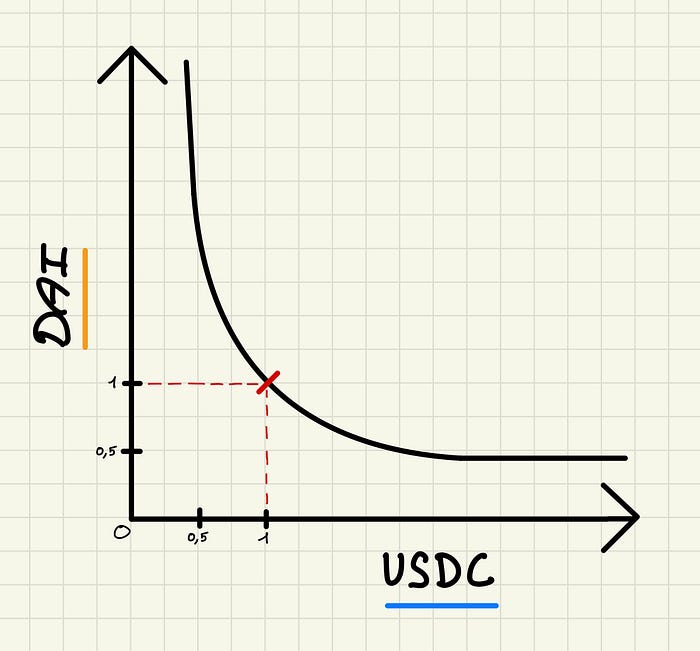

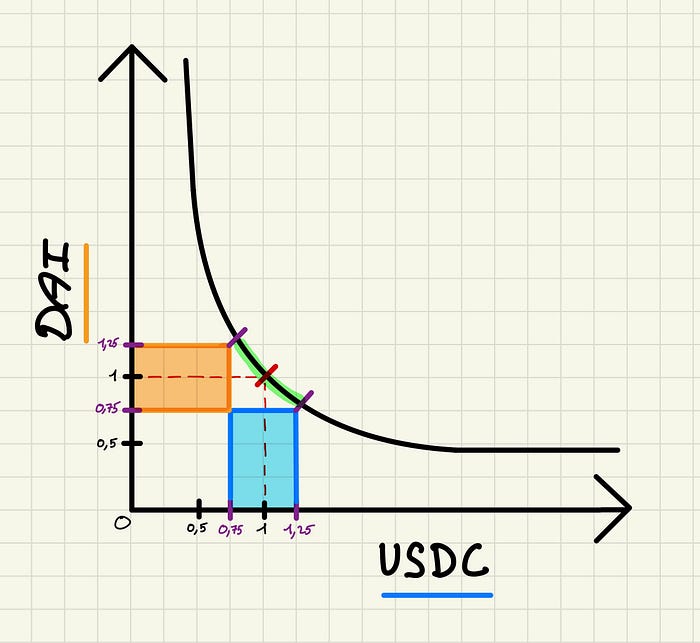

On this chart we have the x-axis as all the possible prices of $USDC, and the y-axis as all the possible prices of $DAI, which are two stablecoins that usually trade at $1. You’ll notice x and y both go from 0 to ∞.

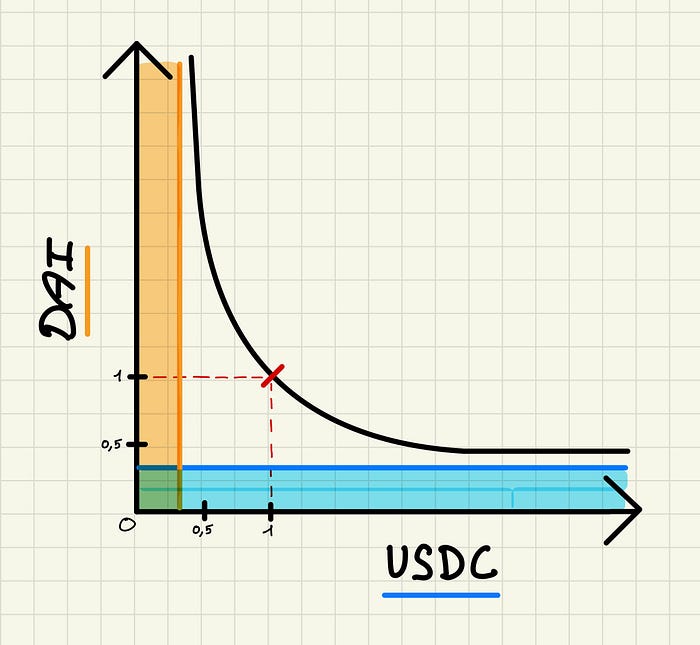

If we add liquidity evenly along this curve, as a constant product AMM does, our liquidity is distributed between 0 and infinity, regardless of the price at which the assets are currently trading (as seen below).

This new chart shows the assets provided by liquidity providers. Highlighted in blue you’ll see the provided liquidity for USDC, and in orange the equivalent liquidity for DAI. You will notice the liquidity available for USDC between the prices of 0.5 and 1 is the same as the liquidity available between the prices of 1 and 1.5.

As trades happen, the ratio in the pool between X and Y is constantly changing. The back and forth relationship between these assets can be illustrated as a bonding curve, which you’ll notice in the middle of the chart. The curve helps us visualise how the price of X changes when Y goes up or down, and vice versa.

Why is this important?

Only a small fraction of the total liquidity is actually being used at any given point on this bonding curve.

A popular example demonstrates that if USDC / DAI trades between $0.99 and $1.01 (as it often does), then only about 0.5% of all the liquidity provided is actually being used.

This means there isn’t much liquidity being deployed at the price range where most of the trading is happening, making for high slippage and a poor trading experience.
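The 0.5% figure can be reproduced with a few lines of Python. This is a sketch under stated assumptions, not an official formula from any protocol: it uses the fact that a constant product pool with liquidity L (where L² = k) holds L/√P of X and L·√P of Y at price P, and asks what share of that value is needed to serve trades inside a given range. The function name `active_capital_fraction` is hypothetical, and fees are ignored.

```python
from math import sqrt


def active_capital_fraction(price: float, lower: float, upper: float) -> float:
    """Share of a full-range x*y=k position's value that backs trades
    between `lower` and `upper` (prices in Y per X, lower <= price <= upper)."""
    if not lower <= price <= upper:
        raise ValueError("price must lie inside the range")
    # The full-range position is worth 2 * L * sqrt(price) in Y terms.
    # The slice serving [lower, upper] is worth
    # L * (2*sqrt(price) - price/sqrt(upper) - sqrt(lower)); L cancels out.
    return 1.0 - (sqrt(lower / price) + sqrt(price / upper)) / 2.0


frac = active_capital_fraction(1.0, 0.99, 1.01)
print(f"{frac:.2%}")   # 0.50%
```

Under these assumptions, only about half a percent of the pool's value is needed to serve every trade between 0.99 and 1.01.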

You can imagine how inefficient this is, especially for stablecoins such as USDC and DAI, where all of the trading happens close to the $1 mark. Your liquidity allocated to 0.20 DAI per USDC is rarely, if ever, going to be utilised.

The price changes between USDC and DAI can be represented as a point on the curve. We can imagine this point doesn’t move much between USDC and DAI, since stablecoins trade very close to that $1 mark.

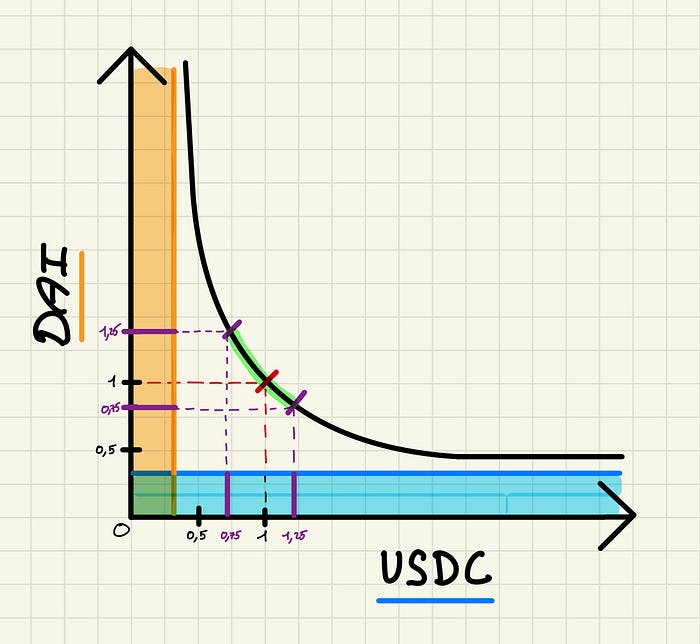

Let’s say DAI isn’t perfectly maintaining its $1 value, and that it trades between 0.75 USDC and 1.25 USDC. Let’s assume the same thing about USDC’s price expressed in DAI.

On the chart above, you’ll see this trading range between 0.75 and 1.25. That means all the trading is happening along the part of the curve highlighted in green.

If our assumption is right, and USDC / DAI hovers between 0.75 and 1.25, then the liquidity outside of that range (highlighted in purple below) is never used. It sits idle in a pool.

Worse, because traders never use it, that capital earns no trading fees for the liquidity providers.

While this example is based on USDC and DAI, we could say the same thing for BTC and ETH. If 1 BTC is worth around 14 ETH, the liquidity allocated for 1 BTC = 100 ETH is not being used any time soon. Any liquidity far enough removed from the current price is useless.

Allocating liquidity at every price point along an almost infinite range obviously looks like a terribly inefficient idea.

“By providing liquidity at these extreme prices, LPs are necessarily sacrificing potential liquidity at tighter pricing closer to the current price” — 0xmons

So why not use an order book? Market makers could set up buy orders and sell orders for USDC worth ~0.9 DAI to ~1.1 DAI, efficiently allocating liquidity where all the trades should be occurring.

AMMs vs order books is a big debate with lots of disagreement. However, we know order books would allocate liquidity more efficiently by only supplying liquidity to probable prices in a narrower range.

But DeFi is not snubbing order books. There is instead a historical / technical reason why we don’t use them as much.

Vitalik Buterin and the birth of AMMs

Blockchains like Ethereum can’t cheaply be used to run an order book, which needs to be constantly managed and readjusted based on volatility. As prices change due to trading, liquidity providers and market makers need to manually adjust the quotes of their bids / asks to reflect the new prices.

This is an old problem dating back to the early days of Ethereum. As Vitalik Buterin explained in a Reddit post at the time:

“market making is very expensive, as creating an order and removing an order both take gas fees, even if the orders are never ‘finalized’ …”

Vitalik’s solution in his post was simple, and could be summed up as:

A * B = k

AMMs started as a constraint, not a choice. Protocols using order books, like dYdX, had to process orders off-chain to be usable, making them less safe, while on-chain AMMs often quoted worse prices, leaving their liquidity providers to be the victims of arbitrage.

A quick recap

- On-chain order books were too expensive to run, and poorly suited to illiquid and volatile assets

- The constant product curve mimics a market maker that distributes liquidity across an almost infinite range of potential prices

- At any given moment, trading activity between the 2 assets of a pair happens in a narrow price range

Uniswap V3 and the birth of Concentrated Liquidity

This was the state of DeFi until Uniswap V3, which sought to combine the granular control of an order book with the automation and simplicity of an AMM.

The beauty of the AMM model, which we didn’t discuss earlier, is how passive it allows liquidity providers to be. When trading occurs on an AMM exchange, quotes for assets are automatically readjusted based on x*y=k. This is different to an order book, where liquidity providers need to constantly change the quotes on their bids / asks to follow the changing prices of the assets.

The result of an AMM, and surely a great part of Uniswap’s initial success, is the ability for investors to effortlessly make passive yield on their liquidity, by simply depositing assets into a pool.

But for the market to mature and to improve the experience for traders, we need the presence of more reactive liquidity providers, who can adjust quotes and liquidity in times of volatility.

Uniswap’s idea

The idea behind Uniswap V3 is simple: instead of allocating liquidity across an infinite price range, why not limit the range where liquidity is supplied?

Instead of x and y being variables defined between 0 and infinity, the price axis is divided into many smaller ranges. Added together, these ranges still cover everything from 0 to infinity, but a provider’s liquidity can now be contained within the limits of the ranges they choose.

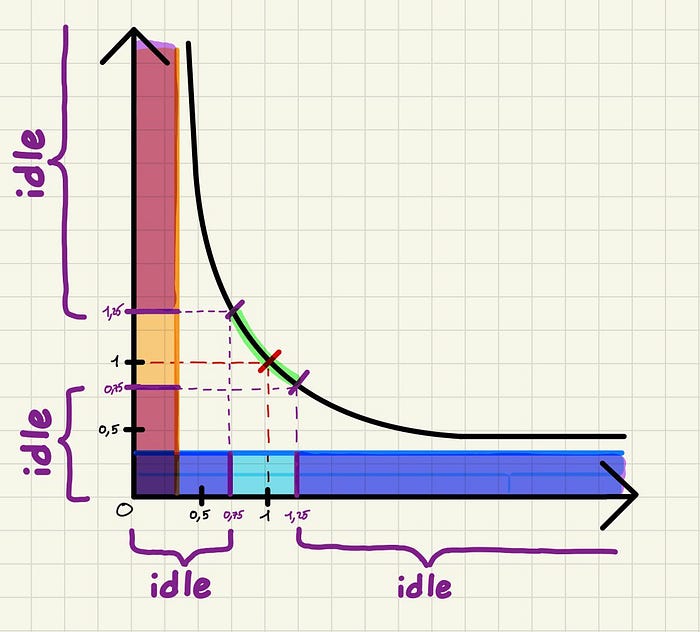

In our previous example, X and Y were allocated across an infinite range of potential prices. But what if we deployed this liquidity on Uniswap V3 instead? We could now define the ranges of x and y on our chart.

Because we know that almost all of the trading for USDC / DAI happens between 0.75 and 1.25 USDC per DAI, we can decide to only allocate our liquidity in this tighter range.

Still highlighted (the areas in blue and orange) is the capital we’ve allocated per asset. As you can see, we’ve allocated significantly less capital, but have created much more depth at the 0.75 to 1.25 USDC / DAI price range.
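The sketch below puts rough numbers on that chart. It uses the standard range-position formulas described in the Uniswap V3 whitepaper (real reserves as a function of liquidity L and the square roots of the price bounds). The function name `position_amounts`, the liquidity value, and the choice of X = DAI and Y = USDC are assumptions made for illustration.

```python
from math import sqrt


def position_amounts(liquidity: float, price: float, lower: float, upper: float):
    """Tokens (x, y) a range position must hold to offer `liquidity`
    between `lower` and `upper`. Prices are quoted in Y per X."""
    p = min(max(price, lower), upper)   # outside the range, only one asset is held
    x = liquidity * (1 / sqrt(p) - 1 / sqrt(upper))
    y = liquidity * (sqrt(p) - sqrt(lower))
    return x, y


L = 1_000.0     # same depth (liquidity) in both cases
price = 1.0     # USDC per DAI

# Concentrated position covering 0.75 to 1.25
x_cl, y_cl = position_amounts(L, price, 0.75, 1.25)

# Full-range constant product position with the same depth
x_full, y_full = L / sqrt(price), L * sqrt(price)

value_cl = x_cl * price + y_cl
value_full = x_full * price + y_full
print(round(x_cl, 2), round(y_cl, 2))       # 105.57 133.97
print(f"{value_cl / value_full:.1%}")       # 12.0%
```

With these assumptions, offering the same depth between 0.75 and 1.25 takes roughly 12% of the capital a full-range position would need.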

Traders will benefit from having more liquidity in the market, despite us having deployed significantly less capital overall. This will enable larger trades with less slippage.

So how much more capital efficient is concentrated liquidity?
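To see what deeper liquidity means for a trader, the following sketch compares the price impact of the same trade against the same amount of capital, deployed once across the full range and once between 0.75 and 1.25. The helper names, the capital figure, and the trade size are hypothetical. Fees are ignored, and the trade is assumed to stay inside the concentrated range.

```python
from math import sqrt


def liquidity_for_capital(capital, price, lower=None, upper=None):
    """Liquidity L bought by `capital` (valued in Y) at `price`.
    Without bounds this is a full-range x*y=k position."""
    sp = sqrt(price)
    if lower is None or upper is None:
        return capital / (2 * sp)
    return capital / (2 * sp - price / sqrt(upper) - sqrt(lower))


def price_after_selling_x(liquidity, price, dx):
    """Price (Y per X) after dx of X is sold into the pool.
    Assumes the trade does not push the price out of the position's range."""
    new_sqrt_price = 1 / (1 / sqrt(price) + dx / liquidity)
    return new_sqrt_price ** 2


capital, price, trade = 1_000_000.0, 1.0, 10_000.0

l_full = liquidity_for_capital(capital, price)
l_cl = liquidity_for_capital(capital, price, 0.75, 1.25)

p_full = price_after_selling_x(l_full, price, trade)
p_cl = price_after_selling_x(l_cl, price, trade)

print(f"full range:   price moves to {p_full:.4f}")
print(f"concentrated: price moves to {p_cl:.4f}")
```

In this example the full-range pool moves by almost 4% on the trade, while the concentrated position moves by about half a percent.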

That depends on how tightly liquidity providers set their desired price ranges.

The tighter the range in which liquidity is deployed, the more depth can be achieved with the same amount of capital.

Hayden Adams gave a simple example to illustrate just how efficient concentrated liquidity can be: he found that the ETH/BTC trading pair on Uniswap V2 could see a 10x improvement in capital efficiency by moving to V3, if liquidity providers used the pair’s 3-month high and low as the bounds of their price range.

This means that by looking at a chart of where the pair traded over the past 3 months, liquidity providers would only need to supply around 10% of the capital they would need in a normal AMM to make the same returns.

In general, only about 25% of your liquidity is being used within +/- 50% of the current price of any asset. This means you could provide liquidity between -50% and +50% of the current price (a very generous range) and be roughly 4x more capital efficient. If the price quoted in the pool doesn’t move beyond + or - 50%, you should earn about 4x the returns, or the same returns with a quarter of the capital.
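The relationship between range width and efficiency can be tabulated with a short sketch. It compares the value of a range position with that of a full-range position offering the same depth, using constant product reserves and ignoring fees. The function name `efficiency_multiplier` and the chosen widths are illustrative assumptions.

```python
from math import sqrt


def efficiency_multiplier(price: float, lower: float, upper: float) -> float:
    """How many times less capital a position on [lower, upper] needs
    than a full-range position offering the same depth at `price`."""
    active_share = 1 - (sqrt(lower / price) + sqrt(price / upper)) / 2
    return 1 / active_share


multipliers = {}
for pct in (0.50, 0.25, 0.10, 0.01):
    # Range of +/- pct around a current price of 1.0
    multipliers[pct] = efficiency_multiplier(1.0, 1 - pct, 1 + pct)
    print(f"+/- {pct:.0%}: {multipliers[pct]:.1f}x")
```

Under these assumptions a +/- 50% range comes out at roughly 4.2x, in line with the rule of thumb above, and the multiplier climbs quickly as the range narrows.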

There is a limit to this…

You then face a tradeoff between a tight range with deep liquidity and a broad range that captures more trading activity.

Trading and volatility naturally move the price of an asset in and out of tight ranges, meaning liquidity is not always being used, and APRs aren’t guaranteed. This means providing concentrated liquidity is no longer a passive activity.
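A simple way to picture this tradeoff is to count how often the price sits inside a position's range, since the position is only used by traders (and only earns fees) while it is in range. The price series below is made up for illustration, and `share_of_time_in_range` is a hypothetical helper.

```python
def share_of_time_in_range(prices, lower, upper):
    """Fraction of price observations that fall inside [lower, upper]."""
    in_range = [lower <= p <= upper for p in prices]
    return sum(in_range) / len(in_range)


# Hypothetical price observations for a volatile period
prices = [1.00, 1.02, 0.98, 1.06, 1.11, 1.04, 0.97, 0.93, 1.01, 1.00]

tight = share_of_time_in_range(prices, 0.99, 1.01)
wide = share_of_time_in_range(prices, 0.90, 1.10)

print(tight, wide)   # 0.3 0.9
```

On this made-up series, the tight range is far more capital efficient while active, but it is only active 30% of the time, compared with 90% for the wider range.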

Evolution of concentrated liquidity (1.5 years later)

So with all the improvements of concentrated liquidity, what has this meant for investors?

Like all things that require active management, different investors achieve different results based on both luck and competence.

As Bancor’s research team discovered, almost half of liquidity providers in Uniswap V3 lose money compared with passively holding their assets.

The reason for this is impermanent loss, which is made permanent when concentrated positions are readjusted. We don’t have time to cover impermanent loss today, and many others have already done a great job explaining it; all you need to know is that it’s a form of opportunity cost. Protocols that attempt to manage liquidity on behalf of users face the same difficulties, and must now come up with ways to outperform the market.

So why hold concentrated positions if I lose money?

Well, the situation is not very different on Uniswap V2 and other constant product AMMs. In many cases, providing liquidity to constant product AMMs results in more losses than simply holding the assets. It ultimately depends on market conditions and timing.

You have to pick your poison when providing liquidity. Whether you use v2 or v3 style AMMs, you stand to lose money if your strategy is flawed. But that’s a debate on active versus passive investing.

Conclusion

If anything, concentrated liquidity has shown the ingenuity of DeFi developers faced with the limitations of blockchain infrastructure.

With more time, we can even hope that Uni V3 and its liquidity providers are able to adjust to the complexity of market making, so that we can fully reap the benefits of higher capital efficiency.

The x*y=k invariant may be a naive formula, but the incredible complexity that can be built on top of it continues to spawn entire sub-industries within Decentralized Finance.